505: quantum of sollazzo

#505: quantum of sollazzo – 14 February 2023

The data newsletter by @puntofisso.

Hello, regular readers and welcome new ones :) This is Quantum of Sollazzo, the newsletter about all things data. I am Giuseppe Sollazzo, or @puntofisso. I’ve been sending this newsletter since 2012 to be a summary of all the articles with or about data that captured my attention over the previous week. The newsletter is and will always (well, for as long as I can keep going!) be free, but you’re welcome to become a friend via the links below.

We have more sponsored content by Ed Freyfogle, organiser of location-based service meetup Geomob, co-host of the Geomob podcast, and co-founder of OpenCage, who has offered to introduce a set of points around the topic of geodata. This week's entry, on geocoding at scale, starts a few paragraphs below.

The most clicked link last week was the Economist’s article on authoritarian economies.

If you’re reading this: what would you like me to create next?

Till next week,

Giuseppe @puntofisso


Become a Friend of Quantum of Sollazzo from $1/month → If you enjoy this newsletter, you can support it by becoming a GitHub Sponsor. Or you can Buy Me a Coffee. I'll send you an Open Data Rottweiler sticker.

You're receiving this email because you subscribed to Quantum of Sollazzo, a weekly newsletter covering all things data, written by Giuseppe Sollazzo (@puntofisso). If you have a product or service to promote and want to support this newsletter, you can sponsor an issue.

✨ Topical

Which food is better for the planet?

There’s a lot of good interactivity and data in this Washington Post article, which lets readers compare meal choices.

Density maps

Geographic data legend Alasdair Rae shares a few maps, with links to data, using recently released Eurostat 1km grid squares.

The State of Open Humanitarian Data 2023

A report by the UN’s Humanitarian Data Exchange.

A tale of love, woe, and data vis

Elliot Bentley of Datawrapper tells his painful story of waiting for his spouse’s visa, looking at the data angle.

Skyfall

Reuters has been “tracking the asteroids that menace Earth”, with some concerning patterns showing.

🛠️📖 Tools & Tutorials

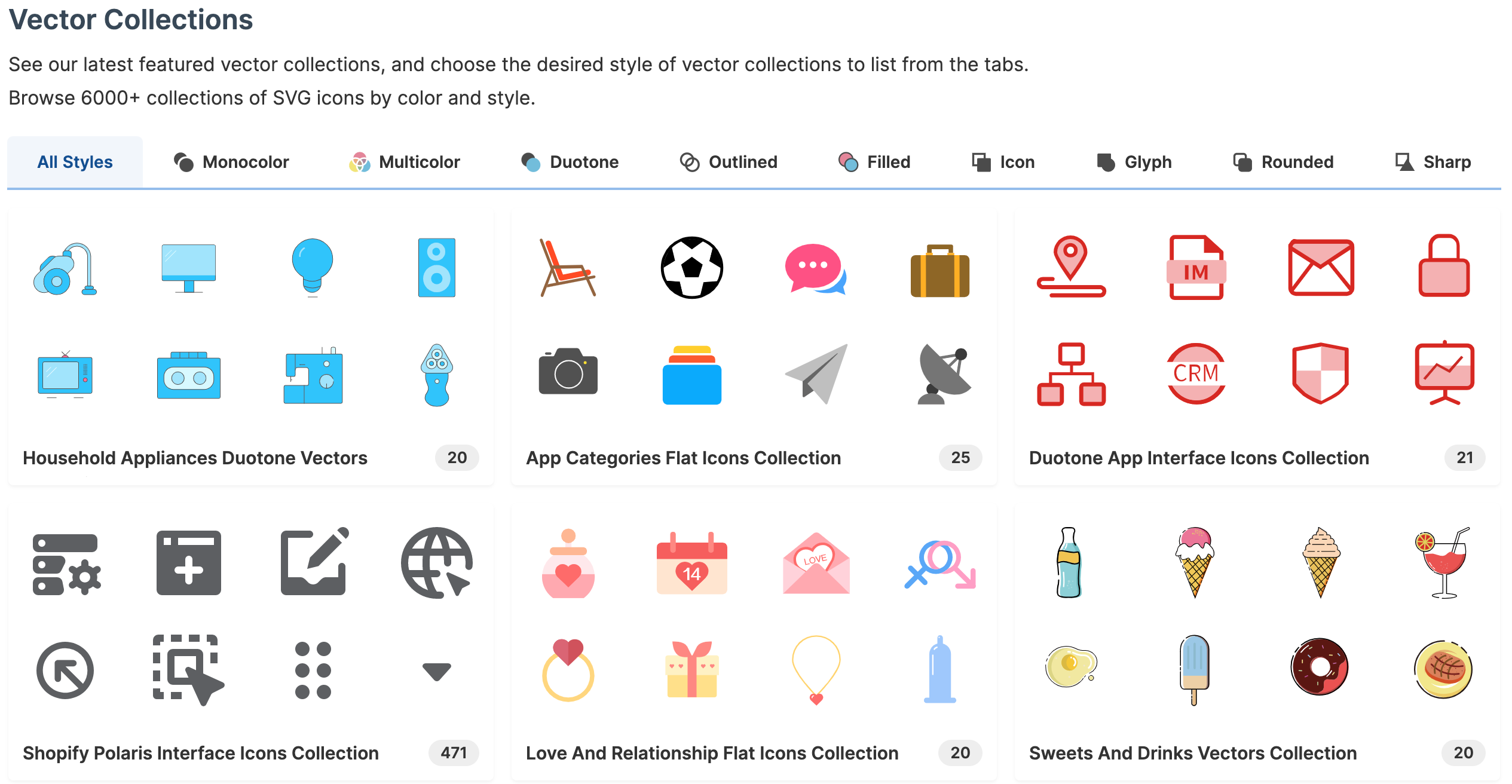

SVG Repo

“500.000+ Open-licensed SVG Vector and Icons”, including for example Facebook.

Springer has released 65 Machine Learning and Data books for free

“Hundreds of books are now free to download”.

Writing ontologies with ChatGPT

Dave Reynolds at Epimorphics: “ChatGPT has a surprisingly good knowledge of linked data and RDF and is able to synthesise examples of RDF data and SPARQL queries. Inspired by this we’ve been exploring the use of ChatGPT in modelling and ontology design. How close is it to being a useful assistant?“

Generative AI for News Media

A Colab notebook, aiming “to provide an overview and some examples of how generative models such as GPT-3 can be used for productive purposes in the domain of journalism” by journalism professor Nick Diakopoulos.

GIJN’s Updated Guide to Planespotting and Flight Tracking

The guide to planespotting is here, while the reasons for the changes are explained in this blog post.

OSINT Tools Library

“A constantly updated list of web-based OSINT tools and techniques from across the open-source intelligence community, curated by Flashpoint.“

Geography

This is a quirky page that looks like a 1990s website, but has a few interesting pointers to concepts in maps and geo on the web.

GPT in 60 Lines of NumPy

“In this post, we’ll implement a GPT from scratch in just 60 lines of numpy. We’ll then load the trained GPT-2 model weights released by OpenAI into our implementation and generate some text.“

Geocoding at scale

In the final installment of our series about using open data for geocoding, we contemplate the challenges of geocoding at scale. What issues do you face when you have many hundreds of thousands or even millions of coordinates or addresses to work on daily? At OpenCage we serve numerous customers in this category, and a common question is whether an API-based solution can handle that type of scale.

An API-based solution, managed by experts, is almost always the most reliable and most affordable way to run such an ongoing system; otherwise you will soon be spending a lot of valuable developer time making sure your geodata stays current. As anyone who has worked with software can confirm: “Building is easy, maintaining is hard”.

Nevertheless, there are challenges that come with depending on any external service, one of course being network availability. At OpenCage we have multiple, fully-redundant data centers, and the availability of our service is independently and publicly monitored by a third party (current and past operational status can be seen at status.opencagedata.com).

Still, even with a highly-available service, some customers worry about the “cost” of crossing the internet to an external service. The fastest API query is the one you don’t even make; a smart caching strategy can go a long way to reducing usage. Because our geocoding API is built on open data you can cache the results as long as you like, and we’ve published a few tips and points to consider.
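To make the caching point concrete, here is a minimal Python sketch of a cache placed in front of a geocoder. It is an illustration only: `CachedGeocoder`, `normalise` and `fake_geocode` are hypothetical names invented for this example, not part of any real client library, and the stand-in function returns made-up coordinates instead of calling a real API.

```python
from typing import Callable, Dict, Tuple

Coordinates = Tuple[float, float]


def normalise(address: str) -> str:
    """Collapse case and whitespace so trivially different spellings share a key."""
    return " ".join(address.lower().split())


class CachedGeocoder:
    """Wraps any geocoding function and remembers results it has already seen."""

    def __init__(self, geocode_fn: Callable[[str], Coordinates]) -> None:
        self._geocode_fn = geocode_fn  # assumption: your own wrapper around an API client
        self._cache: Dict[str, Coordinates] = {}
        self.api_calls = 0  # counts only the lookups that reached the wrapped function

    def geocode(self, address: str) -> Coordinates:
        key = normalise(address)
        if key not in self._cache:
            self.api_calls += 1
            self._cache[key] = self._geocode_fn(address)
        return self._cache[key]


def fake_geocode(address: str) -> Coordinates:
    """Stand-in for a network call; the coordinates are made up for the demo."""
    return (51.5, -0.1)


geocoder = CachedGeocoder(fake_geocode)
geocoder.geocode("10 Downing Street, London")
geocoder.geocode("  10 downing street,   LONDON ")  # served from the cache
```

In a real pipeline the dictionary would probably be swapped for a persistent store such as a database table, so that the cache survives restarts; the in-memory version here just keeps the idea visible.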

We hope you’ve enjoyed our series on the issues around geocoding with open data. While we’ve used our service as the example, we believe many of the concepts and considerations will apply regardless of the data processing tools and services you are building on. If you have questions regarding anything we discussed, please get in touch.

Have a project that will need geocoding? See our geocoding buyer’s guide for an overview of all the factors to consider when choosing between geocoding services.

🤯 Data thinking

Machine Unlearning

A thread by Bart (thanks for ccing me!) that shares a fascinating academic paper and asks an intriguing question: “will machine unlearning become a thing, and will the right to be forgotten become the right to unlearn a model?“

Our Big Mac index shows how burger prices are changing

The Economist: “In July 2022 we updated the Big Mac index to use a McDonalds-provided price for the United States. We also changed our methodology for how we calculate the GDP-adjusted index, the full history of which will now be adjusted whenever the IMF’s historical GDP series are updated. The previously published versions of both indices are available in our archive.“

A Better Path Toward Criticizing Data Visualizations

Jon Schwabish: “Broadly, data visualization criticisms allow practitioners to further the field, explore new approaches and new dimensions, and understand what works and what doesn’t for different audiences, platforms, and content areas. But the current approach to data visualization critique doesn’t achieve these lofty aims, instead it often stoops to derision or defers to rigidity, which does little to move the field forward.“

📈Dataviz, Data Analysis, & Interactive

Popes who were murdered, per century

Matt Allinson often has the best dataviz ideas. Read the note at the bottom, please.

US Aquifer Database

A good source, nicely visualized by the University of California, Santa Barbara.

(via Jeremy Singer-Vine’s Data Is Plural)

OneSoil

“The First Interactive Map with AI-Detected Fields and Crops“

Tessuto normativo italiano

A representation of Italian laws. Each circle is a law, and the dataviz follows each law’s legal references, one after another, until there are no more to follow.

Two First Serves: Analyzing ATP Service Data from 2000–2020

“Which players on the tour should get rid of their second serve and why (and a Dash app to visualize it)“

“4-in-5 players are better off maintaining their traditional first-second-serve strategy. However, for the remaining 1-in-5 players, especially players whose height exceeds 6 feet 4 inches (193cm), I would encourage trying out a two-first-serve strategy (although, I might not wait for a tournament final to give it a go). Players who can demonstrate exceptional ability to win their points on a first-serve might benefit from pressuring their opponents with a two-first-serve strategy, even at the risk of double faulting more often.“
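The trade-off in that quote can be sketched with a simple expected-value comparison. This is a back-of-the-envelope model and not necessarily the one used in the article: it assumes a repeated first serve lands in and wins at the same rates as the original first serve, and the numbers in the example are invented for illustration.

```python
def win_prob_traditional(p1_in: float, w1: float, p2_in: float, w2: float) -> float:
    """Chance of winning the point with a first serve followed by a safer second serve.

    p1_in / p2_in: probability the first / second serve lands in.
    w1 / w2: probability of winning the point once that serve is in.
    """
    return p1_in * w1 + (1 - p1_in) * p2_in * w2


def win_prob_two_first(p1_in: float, w1: float) -> float:
    """Chance of winning the point when the second attempt is another first serve."""
    return p1_in * w1 + (1 - p1_in) * p1_in * w1


# Invented numbers: a big server whose first serve lands 60% of the time.
strong = win_prob_two_first(0.6, 0.8) - win_prob_traditional(0.6, 0.8, 0.9, 0.5)
weaker = win_prob_two_first(0.6, 0.7) - win_prob_traditional(0.6, 0.7, 0.9, 0.5)
print(f"edge with 80% first-serve win rate: {strong:+.3f}")
print(f"edge with 70% first-serve win rate: {weaker:+.3f}")
```

Under these assumptions the two-first-serve strategy only pays off when the first serve is dominant enough to outweigh the extra double faults, which matches the spirit of the article's conclusion.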

Country income comparator

Created by Lindsey Poulter for the World Data Visualization Prize, this website allows you to compare incomes of individual and grouped countries.

Livable cities’ urban networks

“As a primary goal of today’s urban planning is to design livable, future-proof cities via concepts like the 15-minute city, here I collect the top lists of most livable cities and give a visual overview of their road networks – with a ChatGPT twist.“

Ok, you know I’m a fan of city maps, and this blog adds the little twist of making ChatGPT create palettes of colour for them.

Charts show University of California admissions rates for every public high school in state

“Each circle is a public high school, sized by the number of seniors in 2020-21 and positioned by the school’s UC application and acceptance rates in 2021. Hover or tap on a circle for details.“

Dataviz by the San Francisco Chronicle.

Where Else You Can Work

FlowingData’s Nathan Yau: “If you’re searching for a new job, it’s worth looking in different industries — instead of doing more of the same elsewhere, or in the other direction, switching to a completely new occupation. Maybe your current industry is saturated, but a different industry might require your skills.

The searchable chart below shows the industries that people work in, given a specific job”, based on US statistics.

🤖 AI

Stable Attribution

“By returning attribution, it’s possible to proportionally assign credit to human artists in every image generated by A.I. This opens up the possibility of real collaboration, on ethical terms.

…

Version 1 of Stable Attribution’s algorithm decodes an image generated by an A.I. model into the most similar examples from the data that the model was trained with. Usually, the image the model creates doesn’t exist in its training data - it’s new - but because of the training process, the most influential images are the most visually similar ones, especially in the details.

The data from models like Stable Diffusion is publicly available - by indexing all of it, we can find the most similar images to what the model generates, no matter where they are in the dataset.“

Pathwai

“Our pathway to Artificial General Intelligence visualized over 25 years.“

Quantum of Sollazzo is supported by ProofRed’s excellent proofreading. If you need high-quality copy editing or proofreading, head to http://proofred.co.uk. Oh, they also make really good explainer videos.

Supporters*: casperdcl and iterative.ai, Jeff Wilson, Fay Simcock, Naomi Penfold

[*] This is for all $5+/month GitHub sponsors. If you are one of those and don’t appear here, please e-mail me.