Notes on Python 3.12, new matplotlib features and some new open source tools

Further below are 7 jobs including Senior and Research Data Science and Data Engineering positions at companies like (the very lovely) Coefficient Systems, Ocado and the Metropolitan Police.

We had a great PyDataLondon meetup last week including a talk on deep learning for tabular data (it still isn’t beating GBTs) and a talk by my friend Nick on building cross-functional teams.

He talked about turning ML project design sessions from defining hard (and possibly-impossible) questions like “we shall decrease churn by 5%” into derisking questions like “can we understand what drives churn such that we might decrease it in a later iteration”, to remove the risk of being pinned to a hard metric before any work is done. He also spoke on defining both “progression criteria” (to figure out if you’ll keep iterating) and “completion criteria” (I have a “definition of done” in my Success course) so you know when you can stop and add value to the organisation.

Finally he talked about having a dual track for discovery work and for delivery work - each with its own backlog and each moving at its own speed. This means that DS can work on interesting ideas that are risky whilst on the engineering track - which probably includes the same DS members - systems are operationalised and improved with lower risk and more predictable timelines. I quite like the idea of separating two distinct tracks and baking that into discussions early on in teams.

The CfP is open for the PyDataLondon 2023 conference on June 2-4. You should get your talk proposals in and think about whether your organisation will want to sponsor to get in front of great DS and DEng candidates. I’ll run another of my Executives at PyData discussion sessions alongside the usual wide range of talks and workshops.

Pete Bleackley of PyDataLondon shared a thoughtful short write-up on the future of NLP noting “they’re good at producing fluent text, they don’t necessarily produce accurate or useful text” with thoughts on fake news detection and claim verification.

Python Feature Engineering, 2nd ed

I’ve started to read the 2nd edition of Soledad Galli’s Python Feature Engineering book. It covers standard topics like imputing missing data and outlier detection, through to date/time feature extraction, time series feature generation and feature synthesis using ML tools. It also uses Soledad’s Feature Engine open source library.

I’ll write more on this in the next issue. So far I can see that the book is well written, with clear explanations and is supported by lots of code samples and diagrams. It is looking pretty good.

New courses - Software Engineering and Higher Performance

On April 12-14 via Zoom I’ll run my Software Engineering for Data Scientists course - you should attend if you need to write tests and move from Notebooks to building maintainable library code.

On May 3-5 via Zoom I’ll run my Higher Performance Python course - you should attend if your scientific code is slow and scales poorly. We’ll cover profiling so you know what’s slow, then we’ll cover all the ways to make it faster including speeding-up Pandas and scaling to bigger-than-Pandas datasets.

My new Project Design for Data Science course will be listed on my training page once I’ve got a date ready - this is an update on my Success course with a more workshop-focused process to solve the problems leaders are facing right now.

Reply directly to this email if you’ve got questions.

Higher Performance - Python 3.12a4 and faster numpy sorts

Python 3.12 alpha4 (just a little bit of news)

There’s not a huge amount to say right now, but I’m pretty excited about what 3.12 will bring as the plan is for it to include a Just In Time compiler in the core language. We may be able to get rid of some Cython (C-in-Python) compiled code from some libraries, keeping the speed benefit while using pure Python for easier debugging.

The Phoronix news site has a little update on 3.12 noting that SyntaxError and ImportError messages are more informative, but that’s all for now. Each of the recent releases has shown some nice productivity enhancements with better exception messages.

Linux perf support is being added which means we’ll be able to go really low-level and trace Python functions using the perf command, watching how the functions interact with system libraries and external tools. This will bring in a whole new level of profiling, for those who are stretching the limits of Python.
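As a rough sketch of what this might look like from inside Python, the snippet below assumes the `sys.activate_stack_trampoline` and `sys.deactivate_stack_trampoline` hooks described in the 3.12 documentation. The names `workload` and `run_with_perf_trampoline` are hypothetical, made up for illustration. On older Pythons, or on builds without perf support, the sketch simply runs the function without the trampoline.

```python
import sys


def workload(n):
    """Hypothetical CPU-bound function that we would like to see in perf output."""
    return sum(i * i for i in range(n))


def run_with_perf_trampoline(func, *args):
    """Run func(*args), turning on the perf trampoline when the interpreter has it.

    Returns (result, enabled) where enabled says whether perf support was active.
    """
    # Assumption: these sys hooks only exist on Python 3.12+.
    activate = getattr(sys, "activate_stack_trampoline", None)
    enabled = False
    if activate is not None:
        try:
            activate("perf")
            enabled = True
        except (ValueError, OSError):
            # The hook exists but this platform or build cannot use it.
            enabled = False
    try:
        return func(*args), enabled
    finally:
        if enabled:
            sys.deactivate_stack_trampoline()


if __name__ == "__main__":
    result, enabled = run_with_perf_trampoline(workload, 1_000_000)
    print(f"result={result}, perf trampoline active={enabled}")
```

From the shell, the equivalent is likely to be something along the lines of `perf record -g python -X perf my_script.py` followed by `perf report`, where `my_script.py` is a placeholder for your own code.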

I’ve talked before about the enhancements in Python 3.11 including faster execution (circa 40% for math operations) and better exception messages.

Numpy gets faster sorts on recent Intel chipsets

Phoronix reports that on very recent Intel CPUs (Tiger Lake from 2020+) a new SIMD-based sort function was made available and has been added to Numpy.

The pull request notes:

“16-bit int sped up by 17x and float64 by nearly 10x for random arrays. Benchmarked on a 11th Gen Tigerlake i7-1165G7.”

The pull request lists circa 35 speed tests before & after the change, showing anything from minor to huge speed-ups for int16, int32, int64, float32, float64 and (in the midst of the PR) seemingly float16 (which typically are interpreted in the silicon). If sorting is important to you and you have a recent Intel CPU, you’ll want to keep an eye out for the next numpy release.
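To check whether your own machine benefits, a small timing harness along these lines could be run before and after upgrading numpy. This is only a sketch and not the benchmark used in the pull request: `time_sort` is a hypothetical helper, and the array size and repeat count are arbitrary choices.

```python
import time

import numpy as np


def time_sort(dtype, n=1_000_000, repeats=5, seed=0):
    """Return (best_seconds, sorted_array) for np.sort on a random array of dtype."""
    rng = np.random.default_rng(seed)
    if np.issubdtype(dtype, np.integer):
        info = np.iinfo(dtype)
        data = rng.integers(info.min, info.max, size=n, dtype=dtype)
    else:
        data = rng.random(n).astype(dtype)

    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        result = np.sort(data)  # returns a sorted copy, so data stays random
        best = min(best, time.perf_counter() - start)
    return best, result


if __name__ == "__main__":
    print("numpy", np.__version__)
    for dtype in (np.int16, np.float64):
        seconds, _ = time_sort(dtype)
        print(f"{np.dtype(dtype).name}: best of 5 runs {seconds * 1000:.2f} ms")
```

Comparing the printed timings across two numpy versions on the same CPU gives a quick feel for whether the new sort path is being used for your dtypes.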

Numba not quite yet on Python 3.11

Python 3.11 was released a few months ago and contains nice speed improvements and more useful exception messages; generally I use it for new projects. This afternoon whilst working on a speed investigation for a client on sklearn I tried to use Numba and was surprised to find it wouldn’t install. It turns out that numba will hopefully be 3.11 compatible in a few weeks. Since the compiler hooks deeply inside Python, and since 3.11 and 3.12 have a lot of speed tweaks, more work than usual is going into making it compatible.

The solution of course was to downgrade to 3.10 for this investigation. I might have an observation to share on using a profiler on sklearn for the next newsletter but frustratingly I think this particular speed test won’t go anywhere as the underlying bottlenecks are coded in Cython already.
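An alternative to downgrading, for code that has to keep running while numba catches up, is to treat numba as optional. The sketch below falls back to a do-nothing decorator when the import fails, so the same function runs (slowly) as plain Python. The fallback `njit` and the `sum_of_squares` example are hypothetical, written for illustration only.

```python
try:
    from numba import njit
except ImportError:
    # Fallback used when numba is not installed for this Python version.
    def njit(*args, **kwargs):
        """No-op stand-in that supports both @njit and @njit(...)."""
        if len(args) == 1 and callable(args[0]) and not kwargs:
            return args[0]

        def wrap(func):
            return func

        return wrap


@njit
def sum_of_squares(n):
    """Simple loop that numba can compile when it is available."""
    total = 0
    for i in range(n):
        total += i * i
    return total


if __name__ == "__main__":
    print(sum_of_squares(10))
```

This keeps the code importable on a Python without numba, at the cost of losing the speed-up until a compatible release can be installed.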

Open source

Open source tools from hedge-fund Man Group

I got to speak at a private event at Man Group recently and whilst there they talked about some of the tools they’ve open sourced over the years. They’re a lovely company who are long-time supporters of our PyDataLondon meetup and the wider community, including running public hackathons. Some of the tools I see include:

- Notebooker productionises Jupyter Notebooks as soon as they’re committed to git, then runs them and stores results back to MongoDB with an interactive search tool

- dtale, which I’ve noted before, is a tool like the more common pandas-profiling which performs quick EDA on your DataFrame (using Flask and React) and which started life as a stepping stone from SAS’s insight tool. There’s a live demo, a 3d scatter demo and securities over time, all in the Notebook

- PyBloqs is a flexible framework for visualizing data and automating the creation of reports - the “blocks” can be text, tables from a DataFrame, matplotlib charts or more and then they’re assembled and skinned and available for export including to PDF

- AutoPlot “facilitates the quick and easy generation of interactive time series visualisations in JupyterLab”

Do you have any libraries or processes to share that make this kind of data clean-up easier? I’d happily take a look if you’d reply to this email with some links.

Matplotlib 3.7.0 released

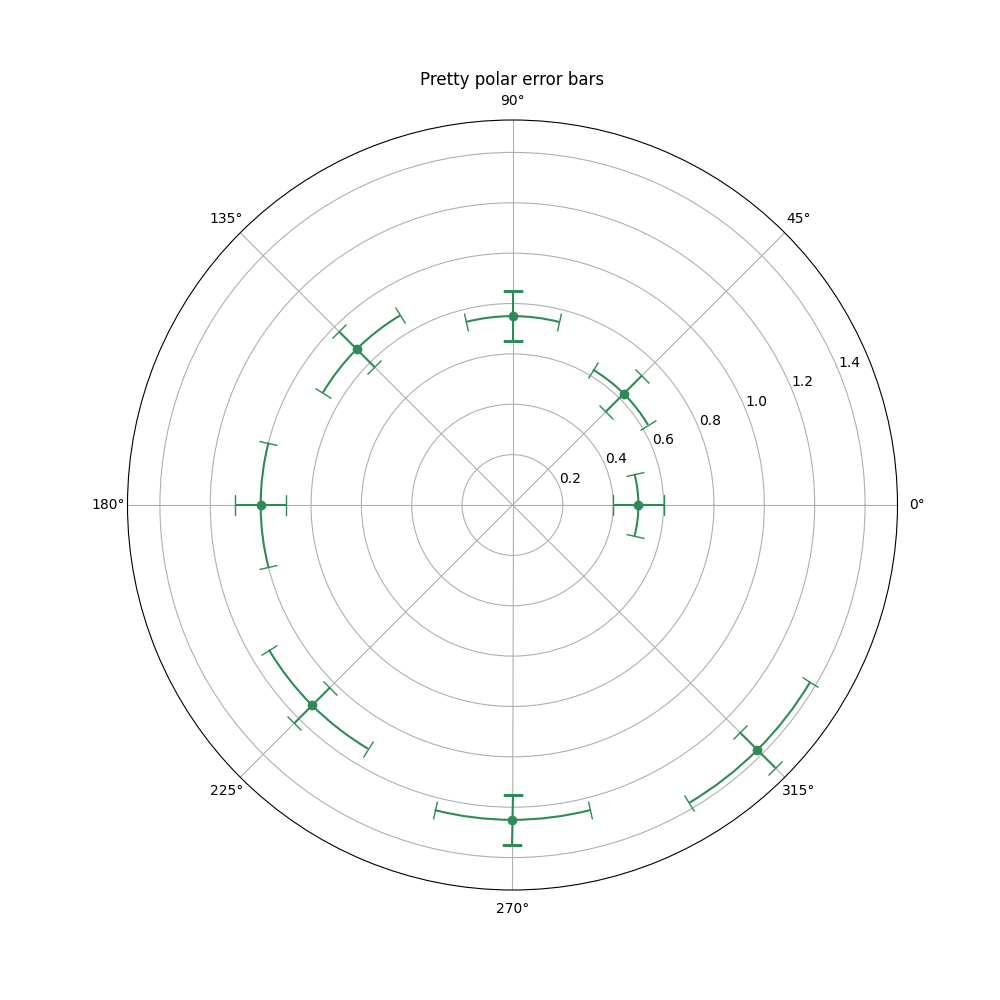

The latest release notes include a pretty hatch option for pie-charts (if pie-charts are your thing - they’re rarely mine), nice error bars on polar plots and lots more:

Bar labels can take format-string specifiers or callables like lambda functions, legends can go outside a figure for a constrained_layout and colorbars can take a location argument.
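A minimal sketch of those three features together, assuming matplotlib 3.7 or later is installed. The data values and the `make_demo_figure` name are made up for illustration, and the Agg backend is chosen only so that the example runs without a display.

```python
import matplotlib

matplotlib.use("Agg")  # headless backend so the sketch runs without a display
import matplotlib.pyplot as plt
import numpy as np


def make_demo_figure():
    """Build a two-panel figure showing bar labels, an outside legend and a colorbar."""
    fig, (ax_bar, ax_img) = plt.subplots(1, 2, figsize=(9, 4), layout="constrained")

    # Bar labels: a {}-style format string above the bars, a lambda inside them.
    bars = ax_bar.bar(["a", "b", "c"], [1200.0, 3400.0, 560.0], label="sales")
    labels_fmt = ax_bar.bar_label(bars, fmt="{:,.0f}")
    labels_fn = ax_bar.bar_label(
        bars, fmt=lambda value: f"{value / 1000:.1f}k", label_type="center"
    )

    # Figure legend placed outside the axes; this needs a constrained layout.
    fig.legend(loc="outside upper right")

    # Colorbar positioned with the location argument.
    image = ax_img.imshow(np.arange(12).reshape(3, 4))
    colorbar = fig.colorbar(image, ax=ax_img, location="bottom")
    return fig, labels_fmt, labels_fn, colorbar


if __name__ == "__main__":
    fig, *_ = make_demo_figure()
    fig.savefig("matplotlib_37_demo.png")
```

On matplotlib versions before 3.7 the format-string label and the `outside` legend location are expected to raise errors, which is a quick way to confirm which version you are running.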

Footnotes

See recent issues of this newsletter for a dive back in time. Subscribe via the NotANumber site.

About Ian Ozsvald - author of High Performance Python (2nd edition), trainer for Higher Performance Python, Successful Data Science Projects and Software Engineering for Data Scientists, team coach and strategic advisor. I’m also on twitter, LinkedIn and GitHub.

Now some jobs…

Jobs are provided by readers. If you’re growing your team then reply to this and we can add a relevant job here. This list has 1,500+ subscribers. Your first job listing is free and it’ll go to all 1,500 subscribers 3 times over 6 weeks; subsequent posts are charged.

Data Scientist

M&G plc is an international savings and investments business. As at 30 June 2022, we had £348.9 billion of assets under management and administration.

Analytics – Data Science Team is looking for a Data Scientist to work on projects ranging from Quantitative Finance to NLP. Some recent projects include:

- ML applications in ESG data

- Topic modelling and sentiment analysis

- Portfolio Optimization

The work will revolve around the following:

- Build data ingestion pipelines (with data sourced from SFTP & third party APIs)

- Explore data and extract insights using Machine Learning models like Random Forest, XGBoost and (sometimes) Neural Networks

- Productionize the solution (build CI/CD pipelines with the help of a friendly DevOps engineer)

- Rate:

- Location: London

- Contact: sarunas.girdenas@mandg.com (please mention this list when you get in touch)

- Side reading: link

Senior Data Scientist at Coefficient Systems Ltd

We are looking for an enthusiastic and pragmatic Senior Data Scientist with 5+ years’ experience to join the Coefficient team full-time. We are a “full-stack” data consultancy delivering end-to-end data science, engineering and ML solutions. We’re passionate about open source, open data, agile delivery and building a culture of excellence.

This is our first Senior Data Science role, so you can expect to work closely with the CEO and to take a tech lead role for some of our projects. You’ll be at the heart of project delivery including hands-on coding, code reviews, delivering Python workshops, and mentoring others. You’ll be working on projects with multiple clients across different industries, including clients in the UK public sector, financial services, healthcare, app startups and beyond.

Our goal is to promote a diverse, inclusive and empowering culture at Coefficient with people who enjoy sharing their knowledge and passion with others. We aim to be best-in-class at what we do, and we want to work with people who share that same attitude.

- Rate: £80-90K

- Location: London/remote (we meet in London several times per month)

- Contact: jobs@coefficient.ai (please mention this list when you get in touch)

- Side reading: link, link, link

(Senior) Data Scientist, Ocado Technology, Permanent, Hatfield UK

Ocado Technology has developed an end-to-end retail solution, the Ocado Smart Platform (OSP), which serves a growing list of major partner organisations across the globe. We currently have three open roles in the ecommerce stream.

Our team focuses on machine learning and optimisation problems for the web shop - from recommending products to customers, to ranking optimisation and intelligent substitutions. Our data is stored in Google BigQuery, we work primarily in Python for machine learning and use frameworks such as Apache Beam and TensorFlow. We are looking for someone with experience in developing and optimising data science products as we seek to improve the personalisation capabilities and their performance for OSP.

- Rate:

- Location: Hatfield, UK

- Contact: edward.leming@ocado.com (please mention this list when you get in touch)

- Side reading: link, link, link

Request for proposals – Database Integration Project

Global Canopy is looking for a consultancy that will work with us on the second phase of development of our Forest IQ database. This groundbreaking project brings together a number of leading environmental organisations, and the best available data on corporate performance on deforestation, to help financial institutions understand their exposure and move rapidly towards deforestation-free portfolios.

- Rate: The maximum budget available for this work is NOK 1,000,000 (including VAT), approximately £80,000.

- Location: Oxford/Remote

- Contact: tenders@globalcanopy.org (please mention this list when you get in touch)

- Side reading: link

Machine Learning Researcher - Ocado Technology, Permanent

We are looking for a Machine Learning Scientist specialised in Reinforcement Learning who can help us improve our autonomous bot control systems through the development of novel algorithms. The ideal candidate will draw on previous expertise from academia or equivalent practical experience. The role will report to the Head of Data in Fulfilment but will be given the appropriate latitude and autonomy to focus purely on this outcome. Roles and responsibilities will include:

- Rate:

- Location: London | Hybrid (2 days office)

- Contact: jonathan.trillwood@ocado.com (please mention this list when you get in touch)

- Side reading: link, link

Analyst and Lead Analyst at the Metropolitan Police Strategic Insight Unit

The Met is recruiting a data analyst and a lead analyst to join its Strategic Insight Unit (SIU). We are looking for people who are keen on working with large datasets using Python, R, SQL or similar software. The SIU is a small, multi-disciplinary team that combines advanced data analytics and social research skills with expertise in operational policing to empirically answer questions linked with public safety, good governance, and the effectiveness of policing methods.

- Rate: £32,194 to £34,452 (Analyst) / £39,469 to £47,089 (Lead Analyst), plus location allowance

- Location: Hybrid, at least one day per week at New Scotland Yard, London

- Contact: tobias.katus@met.police.uk (please mention this list when you get in touch)

- Side reading: link, link

Web Scraper/Data Engineer

Looking to hire a full-time Web Scraper to join our Data Engineering team. scrapy and SQL are desired skills; as a bonus you’ll have an interest in art.

- Rate:

- Location: London, Remote

- Contact: s.mohamoud@heni.com (please mention this list when you get in touch)

- Side reading: link